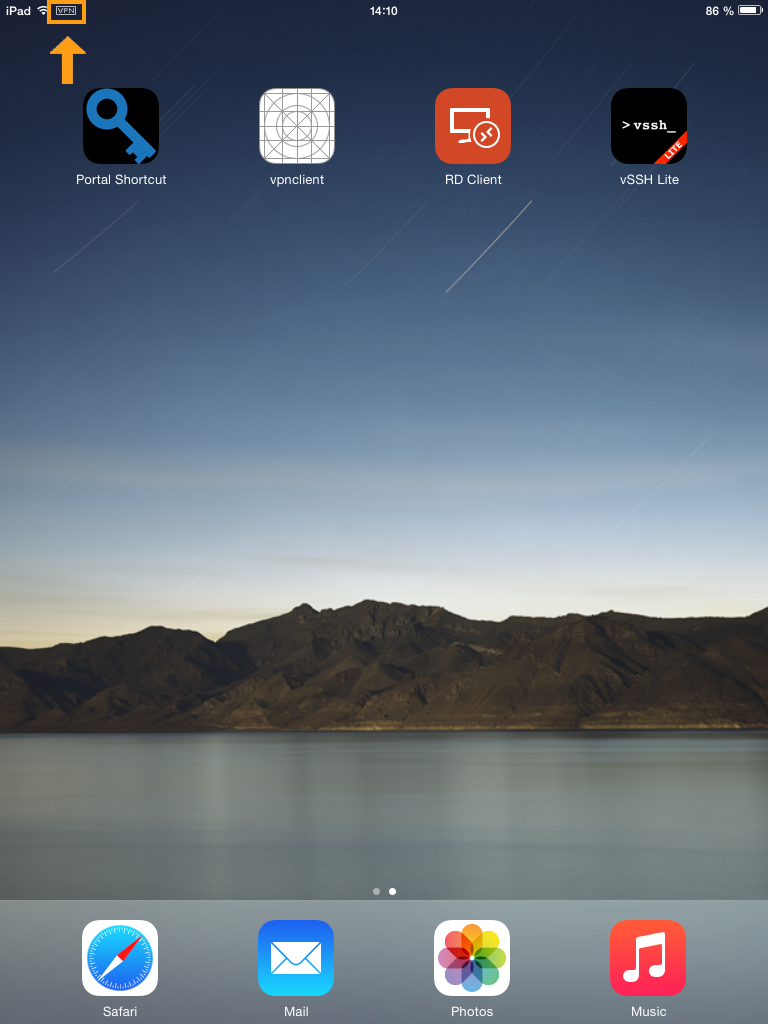

11/24/2023

Cudalaunch nvprof

cudaLaunch() launches the function entry on the device. The parameter entry must be a character string naming a function that executes on the device (a device char string naming the device function to execute), and the function it names must be declared as a `__global__` function. cudaLaunch() must be preceded by a call to cudaConfigureCall(), since it pops the data that was pushed by cudaConfigureCall() from the execution stack.

Returns: cudaSuccess, cudaErrorInvalidDeviceFunction, cudaErrorInvalidConfiguration, cudaErrorLaunchFailure, cudaErrorLaunchTimeout, cudaErrorLaunchOutOfResources, cudaErrorSharedObjectInitFailed

Note: this function may also return error codes from previous, asynchronous launches.

In nvprof output, a launch appears as a line such as the following (truncated in the source): 149.37ms 23.737us cudaLaunch (void matrixMulCUDA(float, float [...]

In nvprof, by default the events are profiled for all instances that can be profiled, and the data is extrapolated for all available instances. If all available instances cannot be profiled due to a hardware limitation, nvprof gives the following warning: 14882 Warning: The following aggregate event values were extrapolated from [...]

For example: `CUDA_VISIBLE_DEVICES='0' nvprof --profile-child-processes python myscript.py`. Looking at the command-line help, I see a `--devices` switch which appears to limit at least some functions to use only particular GPUs. Also, nvprof is documented and has command-line help via `nvprof --help`.

The second nvprof needs to write a 12 GB temporary file to /tmp before it can proceed. Since my 38 GB cloud disk only has 6 GB available, nvprof crashes.

Here is the result checked with dev: In the next CUDA Toolkit release we are planning an nvprof enhancement to support combined metrics and tracing output.

For a visual overview of these results, you can use the NVIDIA Visual Profiler (nvvp). Note however that both nvprof and nvvp are deprecated, and will be removed from future versions of the CUDA toolkit. The NVIDIA Visual Profiler and the command-line profiler, nvprof, now support [...]

[...] state-of-the-art (SOTA) model for analyzing sequential data. Implementations of LSTM RNN in machine learning frameworks usually either lack [...] For example, default implementations in TensorFlow and MXNet invoke many tiny GPU kernels, leading to excessive overhead in [...] Although cuDNN, NVIDIA's deep learning library, can accelerate performance by around 2x, it is closed-source and inflexible, hampering further research and performance improvements in frameworks, such as PyTorch, that use cuDNN as their backend. [...] implementation called EcoRNN that is significantly faster than the SOTA open-source implementation in MXNet and is competitive with the closed-source cuDNN. We show that (1) fusing tiny GPU kernels and (2) applying data layout optimization can give us a maximum performance boost of 3x over MXNet default and 1.5x over cuDNN implementations. We integrate EcoRNN into MXNet Python library and open-source it to benefit machine learning [...] Our optimizations also apply to other RNN cell types such as LSTM variants and Gated Recurrent Units (GRUs).

LSTM RNN is one of the most important machine learning models for analyzing sequential data today, having applications in language modeling, machine translation, and speech recognition. Despite its importance, LSTM RNN training has been shown to have much lower throughput on GPUs compared to other types of networks such as Convolutional Neural Networks (CNNs). [...] also suggests that LSTM RNN has low compute utilization and is limited by GPU memory capacity.

The reason for this low utilization of LSTM RNNs compared with CNNs lies in the difference between their high-level structure and the types of dominant layers (operators) used in their computation. From a high-level perspective, the computation graph of LSTM RNN exhibits a recurrent structure that processes one input at a time, limiting the amount of model parallelism. From a low-level perspective, LSTM RNNs and CNNs use different sets of layers (operators). While CNNs extensively use convolutions, ReLU activations, and poolings, LSTM RNNs mostly use fully-connected layers, tanh/sigmoid activations, and element-wise operations. These fundamental differences limit the applicability of prior work in reducing memory footprint of CNN training (e.g., vDNN, Gist) in the context of LSTM RNNs.

In this work, we focus on LSTM RNN training, which is different from inference in the following major ways: (1) Compute: In training, there is an extra backward pass that propagates gradients back from the loss layer. The performance of training is characterized by throughput (number of training samples processed per second) instead of latency that is usually used in inference. Therefore, data samples are usually batched together before feeding into the neural networks.
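To make the throughput metric mentioned above concrete, here is a minimal Python sketch of how samples per second could be measured over batched data. The helper names (`throughput`, `make_batches`, `measure_throughput`) and the `train_step` callback are hypothetical and chosen only for illustration. They are not part of MXNet, cuDNN, or EcoRNN, and a real measurement on a GPU would also need to synchronize the device before reading the clock.

```python
import time
from typing import Callable, Iterable, Sequence


def throughput(num_samples: int, elapsed_seconds: float) -> float:
    """Training throughput in samples processed per second."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return num_samples / elapsed_seconds


def make_batches(samples: Sequence, batch_size: int) -> list:
    """Group samples into consecutive batches; the last batch may be smaller."""
    return [samples[i:i + batch_size] for i in range(0, len(samples), batch_size)]


def measure_throughput(train_step: Callable[[Sequence], None],
                       batches: Iterable[Sequence]) -> float:
    """Run the (hypothetical) train_step on each batch and return samples/second."""
    processed = 0
    start = time.perf_counter()
    for batch in batches:
        train_step(batch)          # stand-in for one forward + backward pass
        processed += len(batch)    # count samples, not batches
    elapsed = time.perf_counter() - start
    return throughput(processed, elapsed)
```

For instance, `throughput(3200, 4.0)` gives 800.0 samples per second. Counting samples rather than batches keeps the figure comparable across runs that use different batch sizes.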
